The United States government has drawn up strict guidelines for civilian contracts with artificial intelligence companies that would require the firms to allow “any lawful use” of their models by the US military, according to a Financial Times report.

The new rules come after the Pentagon’s very public clash with Anthropic, the maker of Claude AI, over the potential use of its models for automated weapons and mass domestic surveillance programs. The battle culminated in the War Department designating the Dario Amodei-led company a Supply Chain Risk (SCR) and barring it from all defense and related deals.

The US General Services Administration (GSA), which procures software for the federal government, will “require further comments” from industry before the new rules are enforced, a source told the FT.

The rules are aimed at strengthening the procurement of AI services for the US government, the source added. The source also told the publication that the US War Department is considering similar terms for its future military contracts.

Trump admin draws ‘tough rules’ for civil AI contracts

AI companies that want to do business with the US government will have to grant it an irrevocable license to use their models for all legal purposes, according to the report, which cited new draft guidance from the GSA.

The new rules would also require suppliers to provide “a neutral, unbiased tool that does not manipulate responses in favor of ideological dogmas such as diversity, equity, inclusion.” The phrase draws on US President Donald Trump’s executive order against allegedly “woke” artificial intelligence models.

The wording of the clause could also call into question compliance with the EU Digital Services Act, as it requires companies to disclose whether their models have been “modified or configured to comply with any non-US federal government or commercial compliance or regulatory framework,” the source added.

Pentagon vs Anthropic: “Not a punitive action”

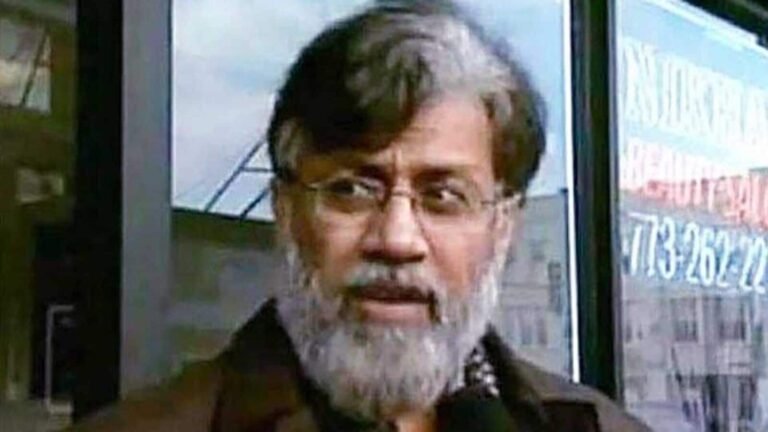

US Assistant Secretary of War Emil Michael said in a podcast that Anthropic’s Supply Chain Risk (SCR) designation came after the company failed to meet the Pentagon’s requirement for “all lawful uses” of the technology.

Michael justified the move by saying that the War Department’s discussions with Anthropic before the talks broke down included requests involving Trump’s Golden Dome proposal and battle scenarios against Chinese hypersonic missiles and drone swarms.

Michael said that the ethical codes of Anthropic and Amodei are not aligned with the needs of the government. “I need a reliable and stable partner who will give me something, who will work with me on autonomy, because one day it will be real and we are starting to see earlier versions of it. I need someone who will not pull out in the middle,” he said.

He also refused to call the move punitive. “I don’t see it as punitive. If their model has that political bias, I don’t want Lockheed Martin using their model to design weapons for me… I can’t have it because I don’t believe what the outputs can be because they’re so beholden to their own political preferences,” he said.

Anthropic to fight the SCR label in court

The Department of War (DoW) said on Thursday it had designated Anthropic as an SCR. Amodei confirmed the designation in a blog post, saying the action lacked a legal basis.

“Yesterday (March 4th), Anthropic received a letter from the Department of War confirming that we have been designated a supply chain risk to US national security. We do not believe this action is legally correct and see no option but to challenge it in court,” he wrote.

Key takeaways

- The US government is tightening its contract rules for AI companies so that their models can be put to any lawful use, including by the military.

- Companies like Anthropic that do not comply with government requirements risk being barred from defense and related deals.

- The guidelines may call into question compliance with international regulations such as the EU Digital Services Act.