Artificial intelligence company Anthropic said last month it would limit the release of its latest AI system to a small number of organizations, including a handful of big tech companies like Microsoft and Google and groups that manage important parts of the Internet.

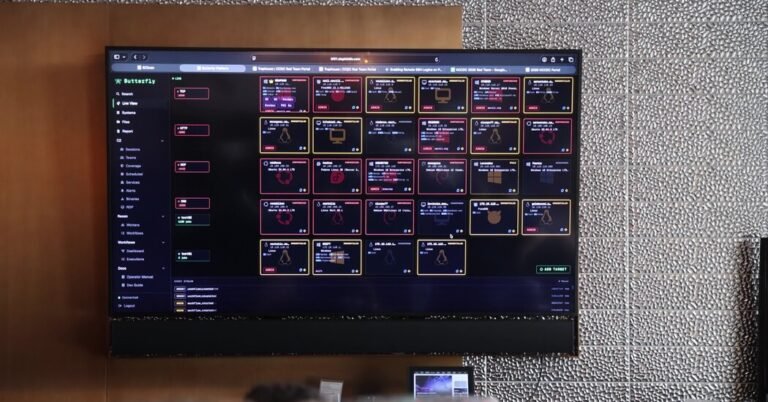

The new system, called Claude Mythos, was too powerful to share with the general public, Anthropic said, because hackers could use it to exploit security holes in computer networks at an astonishing rate.

Silicon Valley executives and officials in Washington were concerned about what Mythos could do, and its release may have helped shake the Trump administration from its defense of AI against government regulation.

The White House is now considering government oversight of new AI models through an executive order that would create an AI task force made up of technology executives and government officials to explore potential oversight practices. Possible plans include a formal government review process for new AI models.

But more than a month after Mythos’ release, cybersecurity experts still disagree on whether Anthropic made the right call. Some applaud the company for limiting who gets their hands on Mythos. Others criticize Anthropic for not sharing it with a wider group of researchers who could try it out and see what it can and can’t do. So far, the only consensus seems to be that there is no consensus on Mythos.

Anthropic has shared the technology with about 40 organizations that maintain critical computing infrastructure so they can use the system to patch security vulnerabilities before hackers exploit them.

Only a handful of the groups and companies that have spent time using Mythos would discuss it with The New York Times. But companies and researchers who didn’t have access were happy to offer their thoughts on how Anthropic released its new AI system.

Their feedback so far has ranged from serious concerns to shrugs. It may take some time for the wider tech community to reach a conclusion on whether Anthropic was right to limit the release of Mythos, a challenge that Anthropic’s management acknowledges.

“For a capability like this, or for a model as powerful as this, it’s kind of an unprecedented situation where we really don’t have all the answers,” Logan Graham, head of Anthropic’s Frontier Red Team, which evaluates Claude for risk, said in an interview. “We really don’t know the best way to implement such models.”

Experts can look at the same situation and come to very different conclusions due to the inherently complex nature of cybersecurity. People can use systems like Mythos to attack computer networks, but they can also use them to defend against attacks. For decades, people have argued about how best to manage this dual nature.

Most experts agree that AI technologies like Mythos are fundamentally changing cybersecurity. The change gained momentum about six months ago, when Anthropic and its main rival, OpenAI, released new systems that are particularly good at writing computer code. If an AI system can write code, it can potentially find and exploit vulnerabilities in software applications.

When Anthropic revealed Mythos, the company said it had used the technology to find thousands of security vulnerabilities that had gone undetected in popular software systems for years. Anthropic also reported that Mythos was better at identifying disparate security flaws and grouping them into “exploit chains,” which malicious hackers use to exploit multiple security holes in a coordinated attack. In the company’s words, the technology represented a “step change” in what was possible with AI.

Cisco, a computer hardware and software company, is one of the companies using Mythos. Anthony Grieco, the company’s senior vice president and chief security and trust officer, said the technology is significantly more powerful than existing systems in certain areas.

Companies like Cisco, he said, should be “super aggressive in how we use this technology to identify vulnerabilities, fix them, and get those fixes into our customers’ hands as quickly as possible.”

He said Mythos was indeed better at identifying exploit chains. But he added that these skills can be used to defend a computer network, not just to attack it. “We use this capability to help triage vulnerabilities and understand which ones are important to fix, so this capability has a really positive connotation in a defense context as well,” he said.

That’s exactly why some cybersecurity researchers say Anthropic should release its system more widely. Like any other cybersecurity tool, it is good for both offense and defense.

“This technology is not too dangerous to release,” said Gary McGraw, a veteran security and artificial intelligence researcher. “If you don’t release a tool like this — or collect it — you’re not solving the real problem.”

Soon after Anthropic’s announcement, independent researchers showed that existing AI systems could find the same security holes that Mythos found. Some cybersecurity experts have argued that Anthropic exaggerated the dangers of Mythos.

For Pavel Gurvich, co-founder and CEO of security company Tenzai, part of the problem is that independent cybersecurity experts are unable to test the system and gain a full understanding of its strengths and weaknesses. That understanding could help them defend against attacks that use the technology.

“I don’t think choosing to share a model with such a small subset of companies is going to help us move forward,” Mr. Gurvich said. “This is especially true because the announcement was accompanied by very bold claims that we cannot judge.”

A week after Anthropic revealed Mythos, its competitor OpenAI said it was also sharing similar technology with only a select group of partners. But the company is sharing its model, GPT-5.4-Cyber, with a much larger group. OpenAI said it would initially share the model with hundreds of organizations and then release it to thousands more partners in the coming weeks.

(The Times sued OpenAI and Microsoft in 2023 for copyright infringement of news content related to AI systems. Both companies have denied the claims.)

Mr. Gurvich said the approach “made more sense,” in part because OpenAI has said that when it shares its technology, it will work to verify users’ identities in an effort to prevent abuse.

Stanislav Fort, a former Anthropic researcher who now runs a security company called Aisle, said keeping AI technology contained will not be possible in the long term because so many tech giants, startups and independent developers are building powerful systems. Many of these organizations “open source” their AI, allowing anyone to use and modify the underlying technology.

As time goes on, he added, the widespread sharing of these technologies will be critical to cybersecurity.

“Security through obscurity is one of the oldest bad ideas in this field,” he said.