On a recent Friday morning, seven cybersecurity veterans gathered in a suite on the 60th floor of the Cosmopolitan Hotel in Las Vegas.

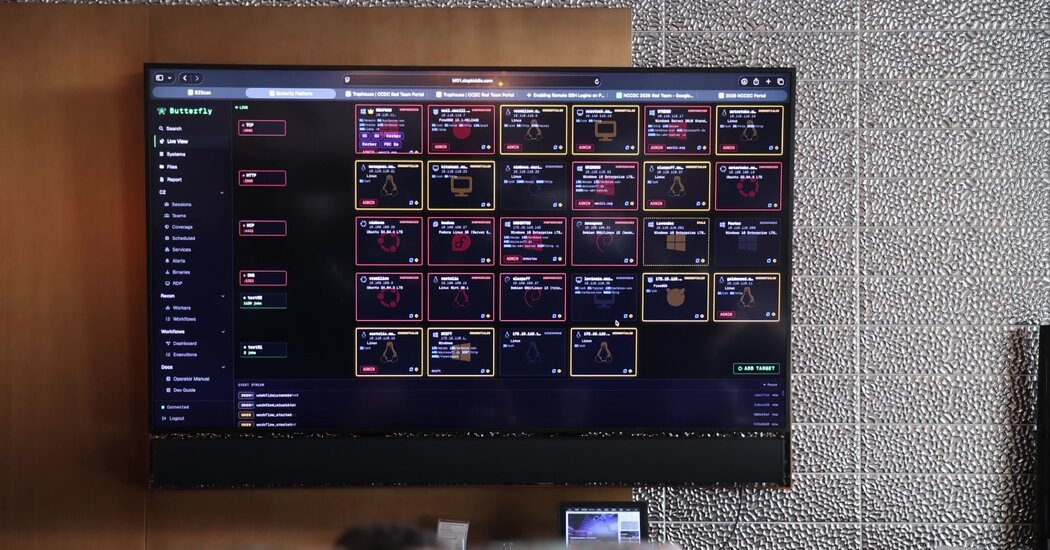

Surrounded by laptops, network cables, spare Wi-Fi antennas, and a wall-mounted television that doubled as a giant computer screen filled with esoteric programming code, they spent the next two days hacking into a computer network in San Antonio as part of an annual event called the National Collegiate Cyber Defense Competition.

When this “red team” of cybersecurity experts attacked the network, dozens of elite computer science students sat in makeshift command centers around the country, trying to stop them.

“Whenever we gain access to their machines and steal data, they lose points,” said Alex Levinson, one of the leaders of the red team. “And we expect to attack with our own malware – something unique and special that they’ve never seen before.”

Hosted by the University of Texas at San Antonio, the event welcomed 10 college “blue teams,” each of which had won a regional competition earlier in the year. This elaborate competition was intended to simulate the high-stakes world of cyber warfare, which meant it included a new entrant: artificial intelligence. One of the blue teams was composed entirely of so-called AI agents that worked mostly on their own.

As artificial intelligence plays an increasingly important role in cybersecurity, this elaborate hacking competition demonstrated both the power and the limitations of these systems. They can help attack computer networks. And they can help defend them. But they are also prone to error. And they still can’t match the skills of seasoned cybersecurity professionals, or even the nation’s most promising computer science students.

But AI companies continue to improve these technologies. Anthropic said last month it would limit the release of its latest AI technology, Claude Mythos, to a small number of trusted organizations because it could provide a new advantage to malicious hackers. OpenAI also later said it would share similar technology with a limited group of partners.

Crouched over a glass table in the Cosmopolitan suite, one of the red team’s veterans, Dan Borges, typed out an expanding list of instructions for the AI agents running on his laptop. As they explored the network in San Antonio and attacked one of the collegiate blue teams, the bots ran errands on his behalf.

Mr. Borges, a 37-year-old security engineer whose resume includes stints at Uber and AI start-up Scale AI, wore a baseball cap backwards over dark brown hair that reached halfway down his back. The cap read, “Aloha Got Soul.”

That morning, he tried to push malicious software onto several dozen computers on the network. As his agents raced through this largely repetitive task, he planned the next phase of the attack. “They help me do things in parallel,” he said. “I can go fast and I can go wide.”

But soon one of his bots took an unexpected turn: it started installing malicious software on his own computer. The bot had decided this was a good way to understand what the malware could do. “Absolutely the worst idea I’ve ever heard,” Mr. Borges said, laughing.

When led by trained experts like him, Mr. Borges said, these technologies can accelerate a wide range of cybersecurity tasks. But he still struggles with their shortcomings.

“It’s very easy to ask them to do something,” he said. “But you have to step back and say, ‘What’s the best way to get them to do what I want them to do?’”

Before the meeting in Las Vegas, Mr. Borges and other members of the red team spent weeks creating custom software tools to use during two days of simulated cyber warfare. Most of them used systems like Anthropic’s Claude Code and OpenAI’s Codex to build these tools faster. Rather than writing all the code by hand, they could rely on the help of AI code generators.

“A lot of what we do before the event, a lot of the development work, is what makes or breaks us during the event itself,” Mr. Levinson said. “Our capabilities have improved because artificial intelligence is now helping with that.”

Others, like Mr. Borges, went a step further and used the same systems to automate tasks during the competition itself as they listened to ’90s hip-hop and tried to crack the network in San Antonio. Because these systems can generate code, they can use websites, probe computer firewalls, and potentially perform almost any other task on the internet.

Two members of the red team, David Cowen and Evan Anderson, sat in front of the giant wall-mounted television and casually asked Claude Code to organize and execute elaborate attacks with names like Project Mayhem. They leaned so heavily on the technology that they sometimes left the suite for sandwiches and coffee while Claude continued to survey the Texas network.

Mr. Cowen, a jovial security consultant from Plano, Texas, with a flowing gray-brown beard, chuckled every time the AI bots did something unexpected. Mr. Anderson, a hacker with tattoos on both arms who runs the Denver security company Offensive Context, never batted an eye.

One afternoon, after returning from lunch, Mr. Cowen looked up at the television screen and chuckled again. While he was getting fried chicken sandwiches, one of his bots had noticed that the blue team had uploaded new software to a machine in San Antonio. The bot then retrieved the software’s default password from a database, hacked into the machine, and began sharing the password with other bots. “Amazing,” Mr. Cowen said, laughing. “I was at lunch.”

But he was quick to say that the bots are only as good as the people who use them. He and Mr. Anderson kept their agents on a tight leash, focusing their efforts on specific tasks and trying to catch any serious mistakes.

Sometimes the bots “hallucinated” network activity, meaning they reacted to events that didn’t actually happen.

“The AI thinks it’s done a lot of things, and it’s telling us it’s done a lot of things,” Mr. Anderson said. “That sounds great, but you have to get it to show that what it did actually exists.”

The two cybersecurity veterans were fighting fire with fire. While the other members of the red team attacked the blue teams full of college students, Mr. Cowen and Mr. Anderson battled the blue team that was staffed only by bots. In this year’s competition, Anthropic arranged for its AI technology to compete alongside 10 teams of college students.

This automated cyber defense team operated with little help from Anthropic staff. But while the college teams each included eight students, the Anthropic team included up to 32 individual AI agents.

During the first few hours of the competition, the bots seemed to struggle and fell to the very bottom of the leaderboard. But they had been slowed down by a network outage. Once the network was back, they started to climb.

“One thing that AI is good at and students are not is paying attention to multiple things at once,” Mr. Cowen said. “Agents communicate better – and never give up.”

They also behaved in strange and unexpected ways, sometimes failing to take the obvious steps needed to defend their machines. Sometimes the bots got stuck in ruts, which meant their human guardians had to intervene.

In the end, however, the bots finished seventh out of 11 teams. The winner was Dakota State University, a perennial contender but first-time champion.

Having watched the technology perform on both sides – offensive and defensive – Mr. Anderson said it remains a tool that is most effective in the hands of experienced cybersecurity professionals. It works best, he said, when it follows strict guidelines.

“I’ve had it in the past, so I look for certain things to happen and then I do the next thing I want it to do,” he said.

Although Mr. Cowen doesn’t trust AI technology to make decisions on its own, he thinks that will change as the systems get better and better.

“It will get there,” he added.